Early this morning, I experienced a situation that proves just how fragile managing a community is today. Our public VallaBus group, a space of nearly 150 people that took me years of hard work to cultivate and keep alive, was completely banned out of nowhere.

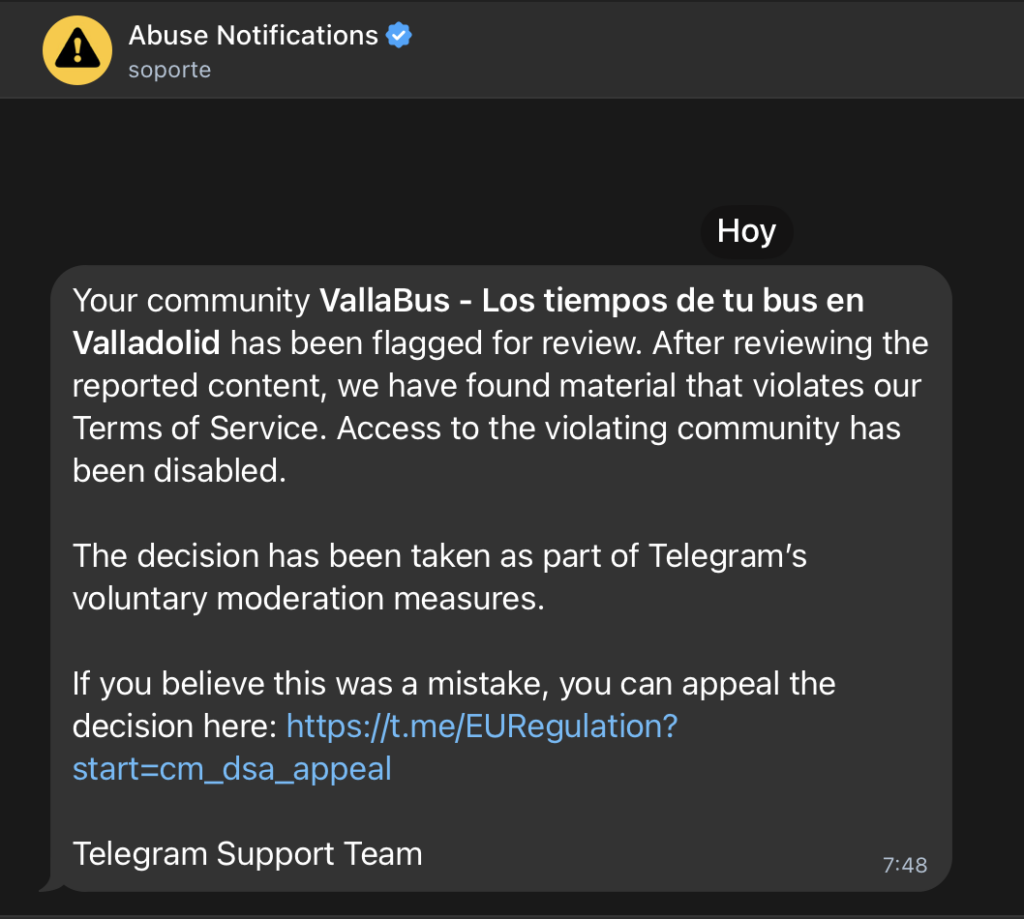

The reason? During the night, a malicious bot managed to join and posted illegal content. As soon as we noticed, we kicked the bot and deleted the message. However, Telegram’s automated system had already taken action: we received a notification of a total group ban.

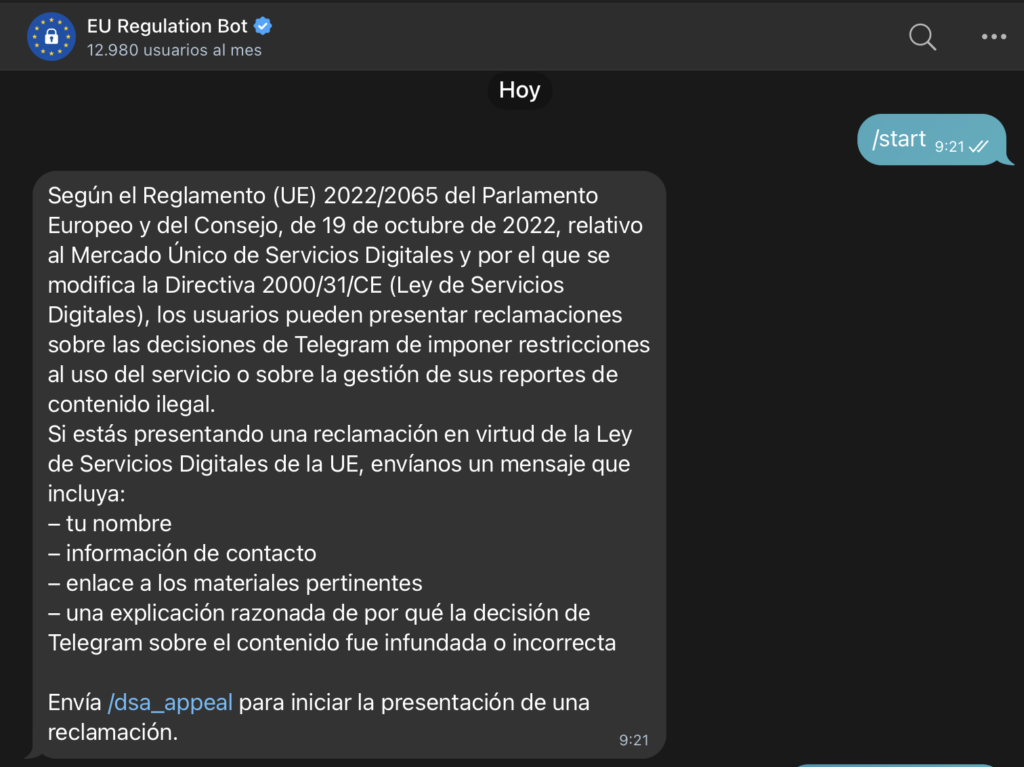

Fortunately, later this morning, our group was restored. After our appeal, Telegram reinstated it without sending us any notification or explanation whatsoever; it simply became active again. While this is a huge relief, the shock of seeing years of work vanish in a second forces me to raise the alarm about a severe structural flaw in the platform.

I want to make one thing absolutely clear: the content posted was CSAM (child sexual abuse material). These atrocities must be relentlessly prosecuted and eliminated. The problem isn’t that Telegram takes action against it; the problem is why they do it with such blind automation that it leaves you completely defenseless.

The explanation lies in the new European legislation, specifically the Digital Services Act (DSA) and strict EU regulations to combat CSAM. These laws force major platforms to remove illegal content almost immediately. The implications are severe: failure to do so results in multi-million euro fines and even direct criminal liability for executives, something Telegram’s founder already experienced in 2024.

To comply with this law and avoid risks, Telegram has implemented a “zero tolerance” automated moderation system that shoots first and asks questions later. This poorly executed, nuance-free implementation has created the perfect attack vector. Today, an attacker doesn’t join your group to participate; they join to inject illegal material, trigger the automated reporting bots, and kill your community.

And this brings us to the root of the problem. For years, Telegram has failed to implement any real improvements to its moderation systems and user controls for administrators. Instead, they have poured all their resources into integrating crypto assets, monetization features, mini-apps, and things that regular people never asked for. They are deep into a clear process of enshittification, progressively worsening the platform to extract more value, which, sooner or later, will take its toll on them.

Given this situation, at VallaBus and the other groups I help coordinate, we are analyzing what definitive measures we can take to bulletproof ourselves in the future. For now, we have temporarily restricted the sending of photos and videos in the group.

Update: The only solution found so far is to set up a custom bot in your group that allows you to delete photos and videos from users who have not been active for a certain period of time. I was able to implement this because I have bots created with n8n, but it is a tedious task that is not within the reach of every group administrator.
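
For anyone who would rather script this than build it in n8n, here is a minimal Python sketch of the rule. It is not the n8n workflow itself, only an illustration under some assumptions: "not active for a certain period" is read as "the user's first message seen by the bot is more recent than a threshold", the 7-day default is arbitrary, and `ActivityTracker` and `should_delete` are hypothetical names. The message dictionaries follow the shape of the Telegram Bot API `Message` object (`from.id`, `date` as Unix time, and media fields such as `photo` or `video`).

```python
from dataclasses import dataclass, field
from typing import Dict

# Message fields treated as "media" in this sketch (an assumption; adjust as needed).
MEDIA_KEYS = ("photo", "video", "animation", "video_note", "document")


@dataclass
class ActivityTracker:
    """Remembers when each user was first seen and flags media from newcomers."""

    # Hypothetical threshold: a user must have been seen for 7 days before posting media.
    min_age_seconds: int = 7 * 24 * 3600
    # user_id -> Unix timestamp of the first message the bot saw from that user.
    first_seen: Dict[int, int] = field(default_factory=dict)

    def should_delete(self, message: dict) -> bool:
        """Return True if the message carries media and its sender is too new."""
        user_id = message["from"]["id"]
        now = message["date"]
        # Record the first sighting; keep the original timestamp on later messages.
        first = self.first_seen.setdefault(user_id, now)
        has_media = any(key in message for key in MEDIA_KEYS)
        return has_media and (now - first) < self.min_age_seconds


if __name__ == "__main__":
    tracker = ActivityTracker()
    newcomer_photo = {"from": {"id": 42}, "date": 1_700_000_000, "photo": []}
    print(tracker.should_delete(newcomer_photo))
```

When `should_delete` returns `True`, the bot would then call the Bot API `deleteMessage` method with the chat and message IDs. In a real deployment the `first_seen` table would need to live in persistent storage so it survives restarts, and the bot must be a group administrator with permission to delete messages.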

My urgent recommendation to any administrator of a public Telegram group is to do exactly the same: restrict the sending of media files immediately. Keep this restriction in place until you find a solution (through bots or settings) that allows you to grant image-sending permissions solely and exclusively to trusted, veteran users.

To be clear, I’m not asking for less control over illegal content. I’m asking that this control not be so poorly designed that it allows anyone to destroy the hard work and legitimate communities of others with total impunity, all while the platform looks the other way counting its cryptocurrencies.